
What Are AI Tools for Productivity, and Can They Reduce Your Workload and Give You Your Time Back?

The quick version

- AI tools for productivity can genuinely cut busy work — drafting, summarising, data processing, and routine communication

- Australian organisations are already proving that the results are real

- But untrained AI use creates its own rework burden, erasing much of the benefit

- The organisations pulling ahead aren’t the ones with the most sophisticated software — they’re the ones investing in teaching their people how to use it well

If you’ve spent any time in the last two years being told that AI is going to change everything, you’re not alone. The hype has been deafening. But here’s the thing — underneath all of it, there’s something genuinely worth paying attention to. Not because AI is magic, but because the problem it’s trying to solve is very real.

There’s a peculiar kind of exhaustion that comes from working harder and harder without ever getting ahead. You know the kind. Your inbox refills the moment you clear it. The meeting finishes, and now someone needs notes summarised, a follow-up drafted, and a report formatted. You have a list of strategic priorities you genuinely want to work on. But first — the admin.

This isn’t a personal failing — it’s structural, and it’s costing Australian businesses far more than most executives realise.

AI tools for productivity have arrived promising to fix exactly this, and the promise is real. But there's a gap between what AI can do and what most teams are actually experiencing, and that gap has a very specific cause.

Australia has a productivity problem that won’t solve itself

Before we talk about AI, it’s worth understanding the context it has arrived into.

According to the Australian Treasury’s Working Future White Paper, Australia’s productivity growth in the decade to 2020 was the slowest in 60 years. McKinsey’s analysis is blunter still: the average American worker now produces roughly 70% more per hour than the average Australian.

This matters because productivity isn’t a macroeconomic abstraction. It’s the mechanism by which organisations grow without simply burning out their people. When it stalls, something has to give — and it’s usually your best employees.

The conversation about AI in the workplace has arrived at exactly this moment. The Tech Council of Australia and Microsoft estimate that generative AI alone could contribute up to $115 billion annually to the Australian economy by 2030, with 70% of those gains driven by productivity improvements. Separately, Pearson's research warns that inefficient skills transitions are already costing Australia $104 billion annually, roughly 3.8% of GDP.

What AI actually does to your working day

There's a lot of noise around AI in the workplace that makes it sound like either magic or a threat. The reality is more interesting than either.

AI doesn’t automate your job. What it primarily does — particularly in knowledge work — is augment your capability, removing the drudgery so you can spend your time on the part that actually requires you.

Think about what a knowledge worker’s day actually contains. Reading lengthy email threads. Summarising a document before a meeting. Drafting a response that requires ten minutes of thinking but two hours of wording. Reformatting data. Writing the first paragraph of something when the blank page is the hardest part.

These tasks are necessary. They’re also, to use a blunt term, busy work. They have to be done, but they’re not where your expertise lives.

AI tools for productivity, particularly tools like Microsoft Copilot that are embedded directly into the applications most Australian workplaces already use, are genuinely good at this category of work. Summarise this meeting. Draft a response to this email. Pull the key figures from this document. Produce a first version of this proposal.

The result isn’t that your job disappears — it’s that you get your time back.

What Australian organisations are actually seeing

This isn't theoretical. The evidence from Australian organisations already using AI tools for productivity is compelling.

In 2024, the Australian Government ran what Nous Group described as the world's largest whole-of-government AI trial, deploying Microsoft 365 Copilot across 60+ agencies and more than 7,600 public servants. Participants reported saving up to one hour per day on tasks like summarising documents, drafting correspondence, and searching for information. Eighty-six per cent wanted to continue using it after the trial ended. The Australian Government's official trial report confirmed that 65% of managers observed a measurable productivity uplift in their teams.

At Westpac, software engineers in a controlled AI coding experiment achieved a 46% increase in productivity, with no drop in code quality. When the bank subsequently rolled AI coding tools out to 800+ engineers, gains settled at a sustained 22–40% improvement in output.

Telstra's experience is also worth noting. The company used AI to assist customer service agents by summarising call transcripts and surfacing relevant information in real time. In trials, 90% of agents showed improved effectiveness, and significantly fewer follow-up contacts were required.

These aren't projections or press-release claims. They are documented, real-world results from Australian organisations that have already done the work.

What most organisations are learning the hard way

So if the results are this clear, why does it feel like most teams are still drowning in admin? Why haven't the productivity numbers moved nationally? Why are people using AI every day and still finishing work exhausted?

The answer reveals something fundamental about how AI actually works, and it has a name.

Why AI is not a magic wand

Researchers from Harvard Business School and Boston Consulting Group ran a landmark study testing how 758 BCG consultants performed on tasks with and without AI assistance. What they found wasn’t a smooth performance curve. It was jagged.

For tasks within AI’s capabilities — drafting, summarising, generating creative options — consultants using AI produced output that was 40% higher quality than their unassisted peers, and finished tasks 25% faster.

For tasks outside AI’s capabilities — logical reasoning with embedded ambiguities, verifying citations, evaluating unusual edge cases — consultants using AI were 19 percentage points less likely to produce a correct solution than those working without it. The AI made them worse.

This is the jagged frontier. AI models don’t fail gradually and obviously. They fail confidently and plausibly. They produce polished-sounding text that happens to be wrong. And without training, most people don’t know where the frontier sits.

When it goes wrong

Australia has already seen what this looks like in practice.

Deloitte was commissioned to produce an independent assurance review for the Department of Employment and Workplace Relations — a project worth AU$440,000. The report, later reviewed by independent researchers, was found to contain AI-generated hallucinations: fabricated academic citations and an invented quote attributed to a Federal Court Justice.

Deloitte agreed to a partial refund and had to reissue the work. The reputational cost was significant. The lesson is simple: AI generates plausible text, not necessarily factual text. Without a trained human in the loop, speed becomes a liability.

The rework burden

The Deloitte example is dramatic, but the more common cost is quieter.

Workday research found that 37% of Australian employees spend one to two hours every week correcting or rewriting low-quality AI outputs. Time saved by the tool is being eaten by time spent fixing what the tool got wrong.

KPMG and the University of Melbourne found that 59% of Australian employees admit to making mistakes at work due to AI errors — and just 30% say their organisation has a clear policy governing AI use.

Eighty-four per cent of Australian knowledge workers are already using AI tools at work, according to Microsoft's Work Trend Index. AI adoption in the workplace is happening fast while training largely isn't, and that gap is the problem.

Why leading organisations are investing in AI upskilling (not just AI software)

This is the strategic insight that separates organisations getting results from those creating noise about AI in the workplace.

Software is easy to buy — proficiency is the scarce resource.

LinkedIn’s 2026 Jobs on the Rise data identifies AI literacy as the single most in-demand skill in Australia right now. Yet Adecco’s research shows only 25% of workers have completed any formal AI training. The gap between adoption and skill is enormous — and it’s exactly where productivity gains are being left on the table.

The organisations closing that gap are doing specific things.

Westpac didn’t simply roll out Microsoft Copilot to 35,000 employees and move on. The bank built an AI literacy program around it — including “prompt-a-thons” where staff competed to design the most effective prompts for specific business problems, plus masterclasses on understanding AI’s limitations. The documented coding gains above were a direct result of teaching people how to use the tool, not just giving them access to it.

ANZ invested in training 3,000 leaders on AI in a single year through a dedicated AI Immersion Centre. CommBank launched an enterprise-wide “AI for All” training program alongside its OpenAI partnership.

The PwC 2025 Global AI Jobs Barometer found that productivity growth has nearly quadrupled in the industries most exposed to AI, and that jobs requiring AI skills carry a 56% wage premium. The investment in people isn't a soft 'nice-to-have.' It's the mechanism that makes the technology work.

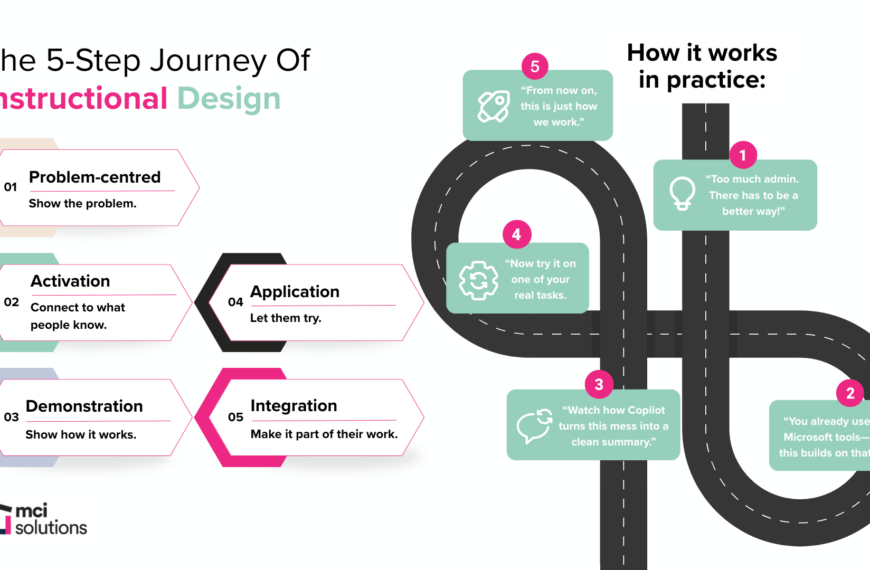

What good AI training actually teaches

Training employees to use AI tools for productivity effectively isn’t complicated, but it is specific. It covers:

- Prompting precisely: How to frame a task so AI produces useful output, not a generic draft that needs to be completely rewritten.

- Knowing the frontier: Recognising which tasks AI handles well (summarising, drafting, formatting) and which require human scrutiny (analysis, verification, nuanced judgment).

- Building verification habits: Treating AI output the way you would treat work from a capable but junior colleague — review before you send.

- Embedding AI into real workflows: Integrating it into the tools and processes your team already uses, not bolting it on as a separate step.

- Understanding AI agents: As organisations move beyond basic prompting toward AI agents that can plan and execute multi-step tasks autonomously, knowing how to manage and direct them becomes a critical skill in its own right.

This isn’t a one-hour lunch-and-learn. It’s structured, practical, and tailored to how your team actually works.

Ready to see what structured AI training looks like?

Here's the honest truth: the organisations winning with AI right now didn't find some secret tool. They took the time to train their people properly, and that's the whole edge.

If your organisation is already using tools like Microsoft Copilot — or considering it — the evidence makes clear that training isn’t optional. The question is how to do it well.

Here at MCI Solutions, we offer Microsoft Copilot training designed for Australian workplaces. The programs are practical, skills-first, and built around the real tasks your team does every day.

To explore what that looks like for your organisation, get in touch with our team or catch up on our AI in Action recorded webinar before you commit to anything.

The organisations that will look back on this period as a turning point are not the ones that bought the most software. They are the ones who built the most capable people.

Frequently asked questions

Will AI replace my job?

The research is fairly consistent on this: in most knowledge work roles, AI augments rather than replaces. It removes low-value, repetitive tasks so you can spend more time on work that requires your judgment, relationships, and expertise. Jobs and Skills Australia has specifically found that generative AI is more likely to augment complex occupations than automate them. The professionals most at risk aren’t those whose jobs involve sophisticated thinking — they are those who refuse to build AI literacy while others around them do.

What is the difference between using AI and knowing how to use AI?

A significant one. The Harvard/BCG Jagged Frontier study found that using AI on the wrong type of task can actively reduce the quality of your work. Knowing how to use AI means understanding which tasks it handles well, how to prompt it effectively, and how to verify outputs before they cause problems. That’s a trainable, practical skill set — not the same as simply having access to a tool.

Our team is already using AI tools on their own. Do we still need formal training?

Almost certainly yes. KPMG and the University of Melbourne found that 48% of Australian employees are using AI in the workplace in ways that contravene their own company’s policies — often because no clear guidance exists. Informal, unsanctioned AI use creates data risk, inconsistent output quality, and productivity gains that evaporate when the person leaves. Structured training creates shared standards and organisational capability that compounds over time.

How do I measure the ROI of AI training?

Start by measuring where your team's time currently goes. How many hours per week are spent on tasks that AI tools for productivity handle well, such as summarising, drafting, reformatting and searching? Multiply by your average hourly cost, apply a conservative efficiency gain, and compare the result with what you spend on training and licences. For reference, Westpac's real-world figure was 22–40% for coding, and the Australian Government trial saved up to an hour per day on specific tasks. A Forrester study commissioned by Microsoft estimated that Microsoft 365 Copilot delivers 116% ROI over three years, with employees saving nine hours per month on average. Training is what realises that potential.
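The back-of-the-envelope calculation above can be sketched in a few lines of Python. This is a minimal illustration rather than a formal ROI model: every input (team size, weekly hours, hourly cost, the 20% efficiency gain, 46 working weeks and the $15,000 total cost) is a hypothetical placeholder, and it counts only first-year time savings.

```python
from dataclasses import dataclass


@dataclass
class RoiInputs:
    # All values are hypothetical placeholders; replace them with your own data.
    team_size: int                      # number of people trained
    hours_per_week_on_ai_tasks: float   # per person: summarising, drafting, reformatting, searching
    hourly_cost: float                  # average cost per hour (AUD)
    efficiency_gain: float              # conservative fraction of that time recovered, e.g. 0.20
    working_weeks_per_year: int         # weeks actually worked per year
    total_cost: float                   # training plus any licence costs (AUD)


def annual_value_recovered(inputs: RoiInputs) -> float:
    """Dollar value of the hours recovered across the whole team in one year."""
    hours_recovered = (
        inputs.team_size
        * inputs.hours_per_week_on_ai_tasks
        * inputs.efficiency_gain
        * inputs.working_weeks_per_year
    )
    return hours_recovered * inputs.hourly_cost


def first_year_roi(inputs: RoiInputs) -> float:
    """Simple first-year ROI as a fraction: (value - cost) / cost."""
    value = annual_value_recovered(inputs)
    return (value - inputs.total_cost) / inputs.total_cost


if __name__ == "__main__":
    example = RoiInputs(
        team_size=10,
        hours_per_week_on_ai_tasks=8,
        hourly_cost=60.0,
        efficiency_gain=0.20,
        working_weeks_per_year=46,
        total_cost=15_000.0,
    )
    print(f"Value recovered: ${annual_value_recovered(example):,.0f}")
    print(f"First-year ROI: {first_year_roi(example):.0%}")
```

With these placeholder figures the team recovers 736 hours, worth $44,160, for a first-year ROI of about 194%. Rerunning it with a lower efficiency gain is a quick way to see how sensitive the result is to that one assumption.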

Where should we start?

Start with the tools your team already uses. If you’re a Microsoft 365 shop, Copilot is the logical entry point — it lives inside Word, Outlook, Teams, and Excel, so the learning applies directly to your existing workflows. From there, identify the highest-volume, lowest-complexity tasks your team handles every week. Those are the best candidates for AI assistance, and they make the immediate ROI most visible.

MCI Solutions is an Australian corporate training provider specialising in workplace upskilling, leadership development, and digital capability programs. Our Microsoft Copilot training is available for individuals and teams. Contact us to discuss your organisation’s needs.